
Most recent audio production tutorials

Browse all the most recent tutorials and articles.

Introducing Neutron 5

Featuring three brand-new plugins, a faster and smarter Mix Assistant, mid/side and transient/sustain channel modes, and more, Neutron 5 delivers world-class quality, whether it’s your first mix or your next big hit.

Popular videos

Learn from top artists and audio engineers how to mix and master music with iZotope products.

Top mixing and mastering trends of 2024

How to mix kick and bass: fundamentals for a cohesive sound

How to use Ozone 11: AI-powered mastering software

How to mix music 101

New to mixing? Start here. Learn the fundamentals of audio mixing, and discover resources to mix like a pro.

Audio mixing

Dive deeper into audio mixing with the resources below.

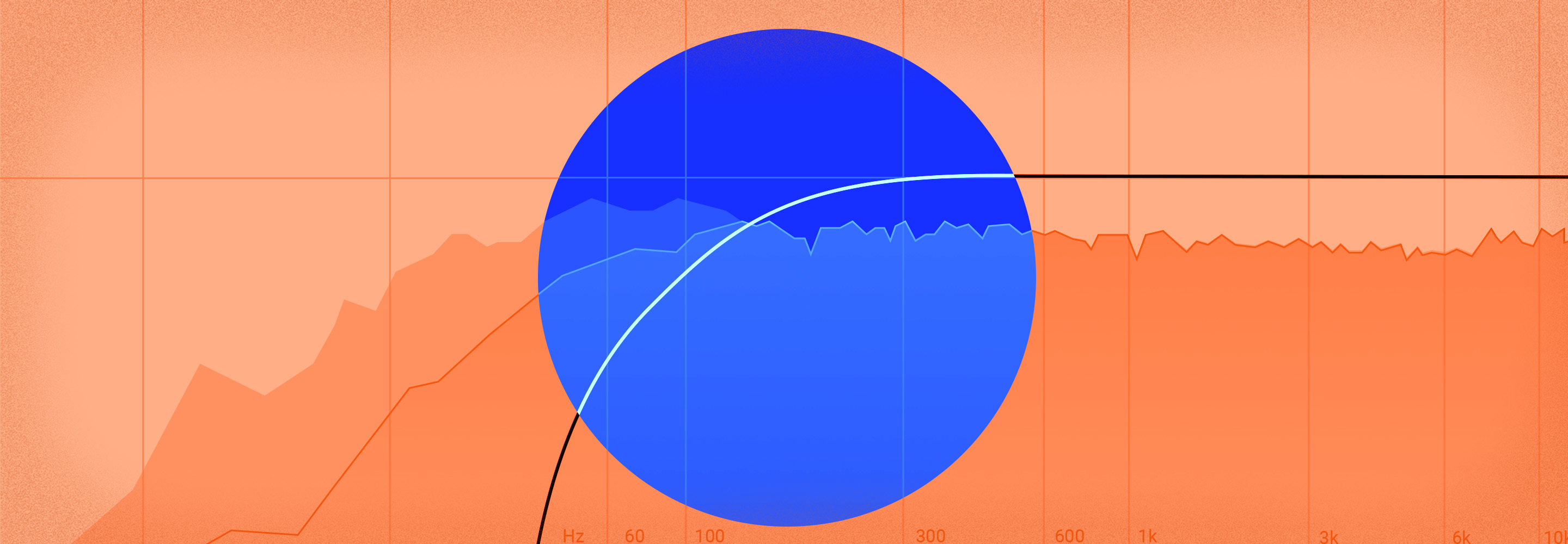

Audio mastering 101

Mastering is the final stage of audio production. Learn the basics of mastering in this guide.

Audio mastering

Dive deeper into audio mastering with the resources below.

Are You Listening?

Watch the latest season of the hit mixing and mastering series.

10 Critical Mastering Mistakes You Should Avoid

How to Hear like a Pro Mastering Engineer

10 Essential Tips for Mastering at Home

Learn by product

Find articles, tutorials, and videos based on products.

Learn by topic

Find articles, tutorials, and videos based on topics.

Audio Mixing, Audio Mastering, Music Production, Audio Repair, Free Resources, Mix Bus Processing, Dialogue Editing, Sound Design, Songwriting, Reverb, Beat Making, Dynamics in Mastering, Dynamics in Mixing, EQ in Mastering, EQ in Mixing, Reference Tracks, Stereo Imaging, Tonal Balance, Loudness, Audio Effects, Mixing Levels, Mixing Workflow, Mixing Guitar, Mixing Bass, Mixing Vocals, Mixing Drums, Home Recording, News, Podcasting