What is tonal balance in mixing and mastering?

Discover what tonal balance is and how you can achieve good tonal balance in mixing and mastering using tools and methods outlined in this tutorial.

“Tonal balance” is one of those terms that mixing and mastering engineers throw around as if it were as commonplace as water, but it can leave the uninitiated scratching their heads. What is tonal balance, what makes it good or bad, and how does one go about achieving good tonal balance?

In this piece, we’ll not only talk you through what tonal balance is, but also show you some tools and techniques you can use while mixing or mastering to achieve great tonal balance for your songs. So, let’s roll up our sleeves and dive in!

In this piece you’ll learn:

- What tonal balance is, and what makes it good or bad

- How to measure and adjust tonal balance during mixing

- The role of tonal balance in mastering

Pro Tip: Follow along and work on the tonal balance of your own mix with a copy of iZotope’s Music Production Suite 7.

What is tonal balance?

In the simplest sense, tonal balance refers to the distribution of energy across the range of audible frequencies—about 20 Hz to 20 kHz—usually in the context of a full mix. Very broadly speaking, you could think of this as the balance between bass, midrange, and treble, but of course, we can—and do—get more granular. Thus, when we talk about tonal balance we’re really talking about how the different frequencies and frequency ranges in a mix balance against each other.
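To make “distribution of energy” a little more concrete, here is a minimal Python sketch that splits a signal’s spectrum into three broad bands and reports what share of the total energy lands in each. The band edges (20 to 250 Hz, 250 Hz to 4 kHz, 4 to 20 kHz) and the function name are illustrative assumptions, not the bands Tonal Balance Control uses, and the naive DFT is only practical for short test signals.

```python
import cmath
import math

# Illustrative three-band split (an assumption, not Tonal Balance Control's bands).
BANDS = {"low": (20.0, 250.0), "mid": (250.0, 4000.0), "high": (4000.0, 20000.0)}


def band_energies(samples, sample_rate, bands=BANDS):
    """Return the fraction (0..1) of spectral energy that falls in each band."""
    n = len(samples)
    energies = {name: 0.0 for name in bands}
    # Naive DFT over the positive-frequency bins, skipping DC and Nyquist.
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        coeff = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        power = abs(coeff) ** 2
        for name, (lo, hi) in bands.items():
            if lo <= freq < hi:
                energies[name] += power
    total = sum(energies.values()) or 1.0  # avoid dividing by zero on silence
    return {name: e / total for name, e in energies.items()}
```

With a 480-sample test signal at 48 kHz, a 1 kHz sine lands almost entirely in the “mid” band, while adding a 100 Hz sine at twice its amplitude moves about 80% of the energy into “low”.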

What makes good tonal balance? The answer here is less clear-cut, for two reasons. First and foremost, a tonal balance that suits one song could be completely inappropriate for another. Second, we’re talking about art, which inherently has a subjective element. It is therefore fair to say that judging the quality of a song’s tonal balance is not only largely personal, but also highly context-dependent.

However, we can still make some general observations about tonal balance. First, if we look at the tonal balance of an entire song built from a typical mix of drums, bass, vocals, and some additional instrumentation, it is not uncommon to see a peak somewhere below 100 Hz, followed by a gentle downward slope toward the higher frequencies. Second, songs within a given genre tend to deviate only subtly from that genre’s typical shape, staying within a reasonably well-defined range above and below the average.
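The idea of an average shape with a defined maximum deviation can be expressed as a simple per-band range check. This is a hypothetical sketch: the band names, the dB values, and the single symmetric tolerance per band are made-up illustrations, not data from any real genre target.

```python
def check_against_target(mix_db, target_mean_db, max_dev_db):
    """Label each band 'above', 'below', or 'ok' relative to target mean +/- max deviation.

    All inputs are dicts mapping a (hypothetical) band name to a level in dB,
    measured relative to the overall level of the track.
    """
    result = {}
    for band, level in mix_db.items():
        lower = target_mean_db[band] - max_dev_db[band]
        upper = target_mean_db[band] + max_dev_db[band]
        if level > upper:
            result[band] = "above"
        elif level < lower:
            result[band] = "below"
        else:
            result[band] = "ok"
    return result
```

For example, with a made-up target sloping from 0 dB (low) to -12 dB (high) and a 3 dB tolerance, a mix measuring -13 dB in a band whose target is -8 dB is flagged “below”, while one sitting 1 dB above its target is still “ok”.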

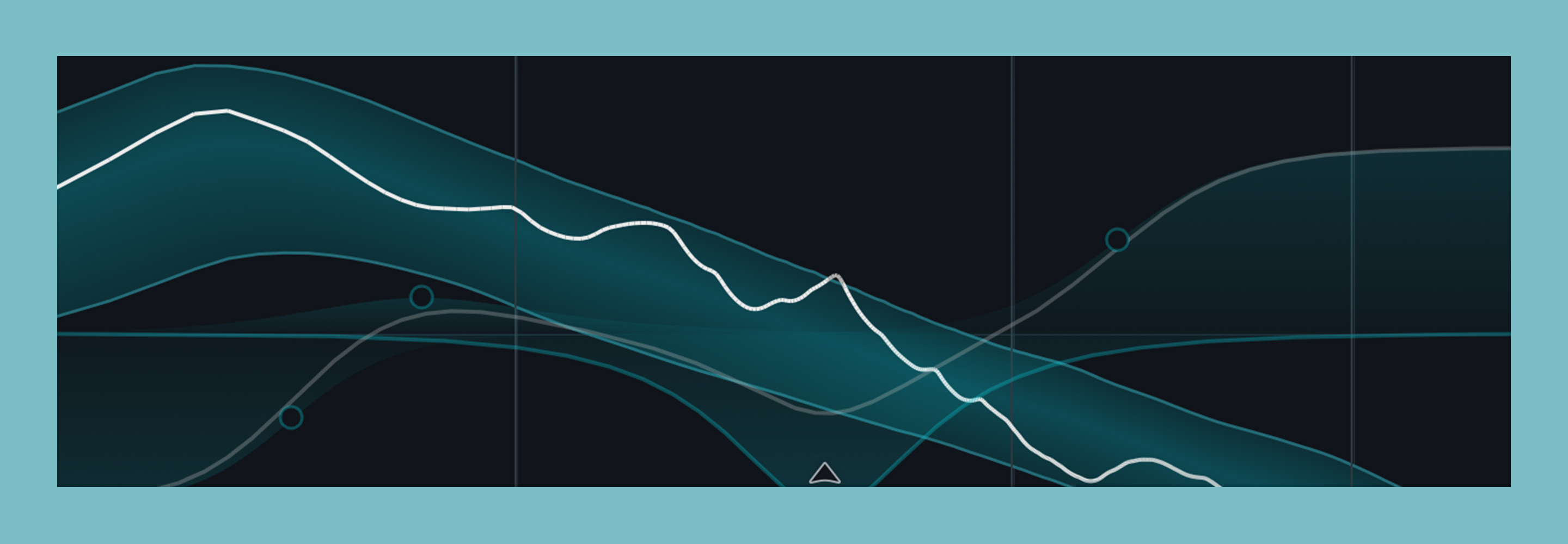

In fact, this kind of shape and deviation is exactly what Tonal Balance Control shows us. Its target curve menu lets us choose from 12 common genres, and each target is derived from a machine-learning analysis of thousands of professional masters of commercially released songs in that style. You can also create your own custom target curves from an individual reference track or, better yet, from an entire folder of reference tracks.
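As a rough illustration of building a custom target from several references, the sketch below averages per-band levels across tracks and records the largest deviation from that average. This is a deliberately simple stand-in (mean plus maximum deviation); it is not the machine-learning analysis Tonal Balance Control uses, and the function name and reference numbers are hypothetical.

```python
from statistics import mean


def build_target(reference_levels):
    """Build a (mean_db, max_dev_db) target from per-band reference measurements.

    reference_levels: list of dicts, one per reference track, each mapping a
    band name to that track's level in dB for the band.
    """
    bands = list(reference_levels[0])
    mean_db = {b: mean(r[b] for r in reference_levels) for b in bands}
    # Widest distance any single reference strays from the average, per band.
    max_dev_db = {b: max(abs(r[b] - mean_db[b]) for r in reference_levels) for b in bands}
    return mean_db, max_dev_db
```

More references generally give a more representative average, which is one reason a whole folder tends to make a better target than a single track.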

Next up, let’s look at how we might use this powerful tool during mixing.

The examples below are using a previous version of Tonal Balance Control. For the latest features, including a new Target Library, Extra Meters, and more, explore the Tonal Balance Control page.

How to use tonal balance in mixing

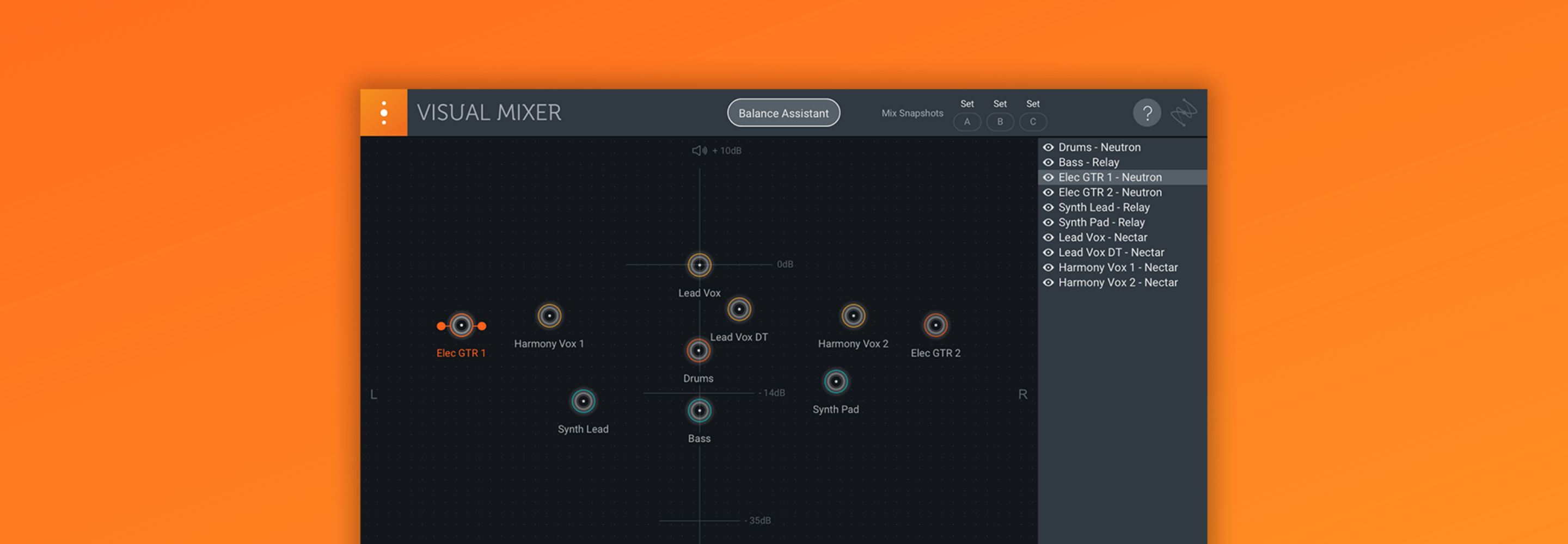

Before we start looking at our tonal balance, it’s important to keep a couple of factors in mind. First, Tonal Balance Control is designed to work on a full mix, so it won’t provide much useful information about individual instruments. Second, it’s a good idea to have at least a rough static mix in place before you dive in too far. Whether you get there by ear or with Neutron’s Assistant View is entirely up to you.

Once you have a basic mix dialed in, drop an instance of Neutron’s Equalizer on each subgroup bus (don’t forget to name each instance accordingly), and put Insight and Tonal Balance Control on the final insert slots of your master bus. This lets us see our overall tonal balance, and it also lets us see each subgroup’s individual frequency contribution and adjust its EQ, all from within Insight and Tonal Balance Control.

Analyzing and adjusting your mix

Next, navigate to the portion of your song with the fullest arrangement (probably something like the final chorus), hit play, and look at what’s going on in Tonal Balance Control. In our example mix, shown below, there are a couple of frequency ranges we might want to address with EQ: some energy buildup at the extreme low and high ends of the spectrum, along with a bit of a scoop in the high mids.
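If you want a quick, objective hint for where the fullest section sits, one rough proxy is the stretch with the highest RMS level. The sketch below (hypothetical function name, non-overlapping four-second windows by default) does just that. Loudness is not the same thing as arrangement density, so treat the result as a starting point and confirm by ear.

```python
import math


def loudest_window(samples, sample_rate, window_seconds=4.0):
    """Return (start_seconds, rms) of the non-overlapping window with the highest RMS."""
    size = max(1, int(window_seconds * sample_rate))
    best_start, best_rms = 0, 0.0
    for start in range(0, len(samples) - size + 1, size):
        chunk = samples[start:start + size]
        rms = math.sqrt(sum(x * x for x in chunk) / size)
        if rms > best_rms:
            best_start, best_rms = start, rms
    return best_start / sample_rate, best_rms
```

Non-overlapping windows keep the sketch simple; an overlapping (hop smaller than the window) scan would locate the peak section more precisely at the cost of more computation.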

Viewing the tonal balance of our rough mix

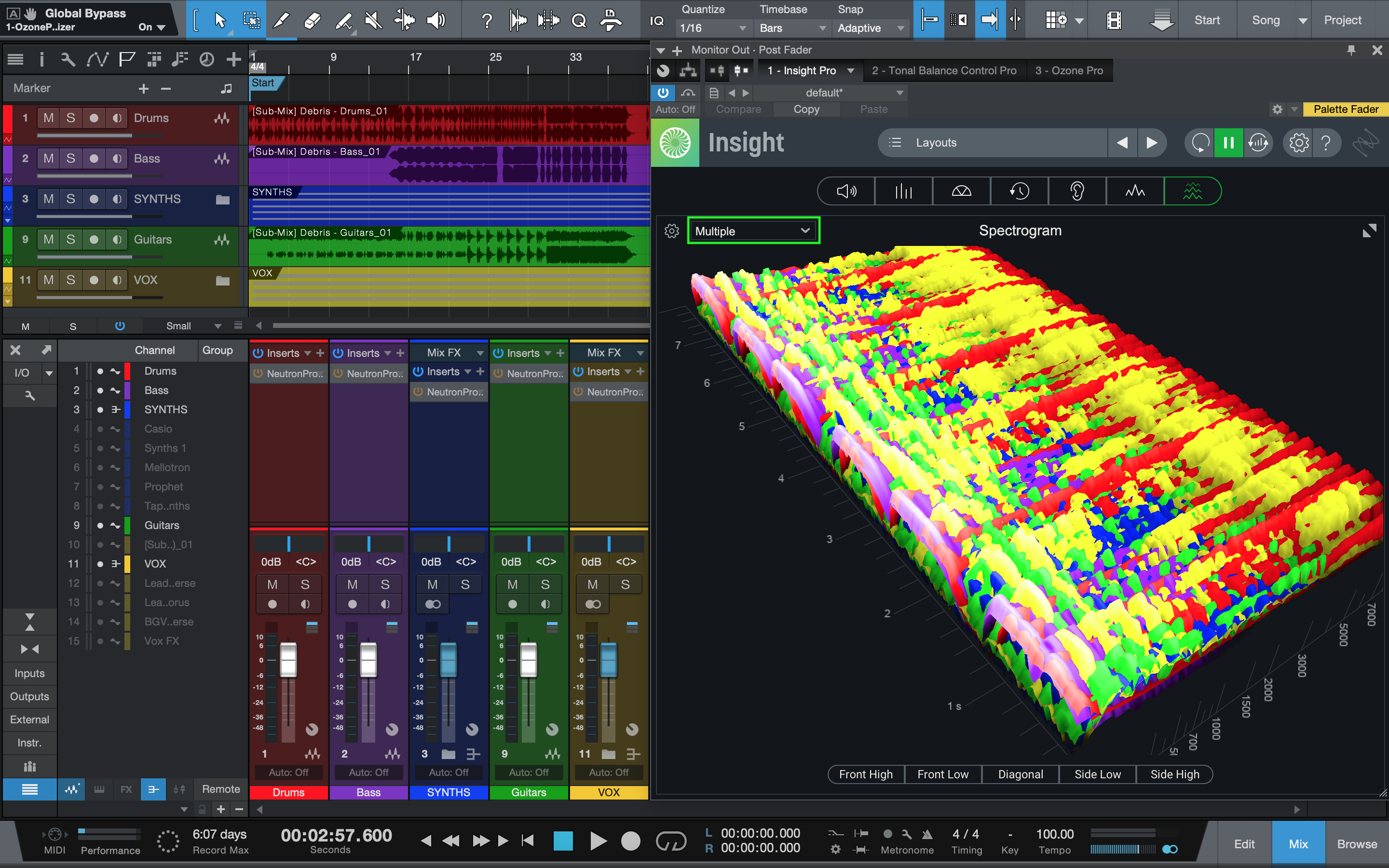

To pinpoint what’s contributing to these frequency ranges, let’s switch over to Insight and maximize the spectrogram panel. The “Select a source” dropdown lets us view the outputs of our Neutron Equalizer instances, and we can even assign them the same colors we used on our mixer channels. When we press play again, we can see how everything fits together and decide where to EQ for a better tonal balance.

Viewing frequency contribution by subgroup in Insight

For instance, we can see some low-end clutter coming from our guitars and vocals; the fundamental of the bass is largely overpowering the kick drum; the vocals and synths are both fairly scooped in the high mids; and the excess energy in the high end comes mostly from the vocal, and partly from the drums. Armed with this information, we can pop back into Tonal Balance Control and start making adjustments.
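Working out which subgroup is responsible for a given range boils down to comparing each subgroup’s power in that band. Here is a minimal sketch, assuming you have per-subgroup levels in dB for a single band and that the subgroups are uncorrelated, so their powers simply add. The function names, subgroup names, and levels are hypothetical.

```python
import math


def contributions(subgroup_levels_db):
    """Share (0..1) of a band's total power contributed by each subgroup.

    Assumes uncorrelated subgroups, so powers (10 ** (dB / 10)) add.
    """
    powers = {name: 10 ** (level / 10) for name, level in subgroup_levels_db.items()}
    total = sum(powers.values())
    return {name: p / total for name, p in powers.items()}


def band_total_db(subgroup_levels_db):
    """Combined level (dB) of all subgroups in the band, under the same assumption."""
    return 10 * math.log10(sum(10 ** (lvl / 10) for lvl in subgroup_levels_db.values()))
```

Because levels add as powers, two subgroups at the same level sum to about 3 dB louder than either one, and a subgroup 10 dB quieter than the others contributes only a small fraction of the band.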

At the bottom of the Tonal Balance Control window, you’ll see a “Select a source” dropdown which will allow you to select any of the Neutron Equalizers you’ve added to your subgroup buses. Once selected, you can adjust the EQ while still viewing the tonal balance curve.

Sometimes turning a subgroup up or down is as useful as EQing it. You can do this by adjusting the output gain of the selected Equalizer, shown at the top right of the EQ readout in Tonal Balance Control.
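Output gain is set in decibels, and a change of g dB scales the signal’s amplitude by 10^(g/20). A tiny pair of helpers makes the conversion explicit; the function names are our own.

```python
import math


def db_to_gain(db):
    """Convert a gain change in decibels to a linear amplitude multiplier."""
    return 10 ** (db / 20)


def gain_to_db(gain):
    """Convert a linear amplitude multiplier back to decibels."""
    return 20 * math.log10(gain)
```

For example, a 1 dB trim multiplies the amplitude by about 0.89, and a 6 dB cut roughly halves it.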

Adjusting vocal EQ within Tonal Balance Control

As you’re doing this, it’s important to keep a few things in mind:

- First and foremost, use your ears. This visual information can act as a powerful roadmap to help you find the elements and areas that may need attention, but at the end of the day, it’s all about what you’re hearing.

- Don’t aim for ruler-flat. It’s natural to have some variation in your tonal balance curve, and that’s part of what gives a song its sonic signature.

- Think about whether you’re adjusting individual or multiple sources in a given area and tailor your EQ moves accordingly. The more sources you’re adjusting in a given range, the less EQ each is likely to need.
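To see why spreading a move across several sources means each one needs less EQ, here is a sketch that computes the uniform cut required on a chosen subset of sources to lower a band’s combined level by a given amount. It assumes uncorrelated sources whose powers add; the function name, source names, and levels are hypothetical.

```python
import math


def cut_needed_db(levels_db, targeted, reduction_db):
    """Uniform cut (dB) on the `targeted` sources that lowers the band's summed
    power by `reduction_db`. Assumes uncorrelated sources (powers add).
    Returns None if cutting only those sources cannot reach the goal."""
    powers = {name: 10 ** (lvl / 10) for name, lvl in levels_db.items()}
    total = sum(powers.values())
    hit = sum(powers[name] for name in targeted)
    untouched = total - hit
    goal = total * 10 ** (-reduction_db / 10)  # desired total power after the cut
    remaining = goal - untouched  # power the targeted sources may keep
    if hit == 0 or remaining <= 0:
        return None
    return -10 * math.log10(remaining / hit)
```

With two equally loud sources, lowering the band by 1 dB takes a 1 dB cut on both, but roughly 2.3 dB if you cut only one of them, and a 4 dB reduction isn’t reachable from one source alone.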

Finally, let’s look at the role of tonal balance in mastering.

How to use tonal balance in mastering

In the mastering stage of audio production, engineers compare the tonal balance of a reference track with their own work to help guide their EQ decisions. While this is a skill that can take years to hone, Tonal Balance Control can help here as well. Just add an instance of the Ozone Advanced
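The reference comparison described above can be sketched as a per-band difference between your master and a reference, turned into small suggested EQ moves. Assumptions: both tracks have already been loudness-matched and measured into the same hypothetical bands, and the cap of plus or minus 2 dB is an illustrative stand-in for subtle mastering moves, not a rule from any tool.

```python
def suggest_master_moves(master_db, reference_db, max_move_db=2.0):
    """Per-band EQ suggestion (dB) nudging the master toward the reference.

    Both inputs map band names to loudness-matched levels in dB. Suggestions
    are clamped to +/- max_move_db to keep mastering moves gentle.
    """
    moves = {}
    for band, level in master_db.items():
        diff = reference_db[band] - level
        moves[band] = max(-max_move_db, min(max_move_db, diff))
    return moves
```

The clamp means a large gap (say, 5 dB missing in the highs) still yields only a 2 dB suggestion, which is a cue to revisit the mix rather than force it in mastering.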

Adjusting the tonal balance of your master

The EQ moves we make in mastering are often subtler than those made in mixing, and the guidelines laid out above become doubly important here: use your ears, don’t aim for ruler-flat, and remember that any EQ move made during mastering can affect every element of your mix. Did I mention you should use your ears?

That said, as with your mix adjustments, the tonal balance curve can provide a great roadmap to guide you toward areas that may need some attention. In our example mix, we’re reinforcing some of the moves we made in the mix, but only by a decibel or two. Conveniently, the vertical scale of the EQ is adjusted so that we can see moves of half a decibel or less.
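To get a feel for how small a one-decibel bell really is, the sketch below computes the magnitude response of a standard peaking filter from Robert Bristow-Johnson’s “Audio EQ Cookbook”. This is a generic textbook filter chosen for illustration; we’re not claiming it matches the filters inside Ozone’s EQ, and the center frequency and Q used in testing are arbitrary.

```python
import cmath
import math


def peaking_gain_db(f0, sample_rate, gain_db, q, freq):
    """Magnitude response (dB) at `freq` of an RBJ cookbook peaking EQ
    centered on f0 with the given gain (dB) and Q."""
    a = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = (1 + alpha * a, -2 * math.cos(w0), 1 - alpha * a)      # numerator coefficients
    den = (1 + alpha / a, -2 * math.cos(w0), 1 - alpha / a)    # denominator coefficients
    z1 = cmath.exp(-2j * math.pi * freq / sample_rate)         # z^-1 on the unit circle
    h = (b[0] + b[1] * z1 + b[2] * z1 ** 2) / (den[0] + den[1] * z1 + den[2] * z1 ** 2)
    return 20 * math.log10(abs(h))
```

By construction this filter reaches exactly the requested gain at its center frequency and returns to 0 dB at DC and at Nyquist, so a +1 dB bell at 3 kHz leaves 100 Hz essentially untouched.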

Adjusting master EQ in Tonal Balance Control

To give you a sense of the kind of difference these types of tonal balance adjustments can make across a mix and master, here’s an excerpt from “Debris” by Iris Lune, first flat, and then with our mix and master tonal balance adjustments.

Start mixing and mastering with Tonal Balance Control

As you can see, the way Neutron, Ozone, Insight, and Tonal Balance Control integrate makes for a powerful combination that can speed up your workflow while also providing guidance. Hopefully this has given you some ideas for new ways to use Tonal Balance Control in your projects, and demonstrated the impact that tonal balance adjustments can make.