The Development of Low End Focus

Low End Focus in Ozone 9 helps you get the low end “right” in a mix or master. Learn about the development and rationale behind this helpful tool.

The low end problem

Getting the low end “right” in a mix or master can drive a music producer or engineer mad. We’re always fighting with room acoustics and our mental states as we struggle to create something that feels right.

Bass energy and spectral balance are things we can control reasonably well with EQs, using tools such as spectrograms and Tonal Balance Control to guide us. But since the low end is a moving target made up of transient (stochastic) and pitched energy, static EQ changes are not always ideal. Dynamics and transient shaping tools, such as compressors and expanders, usually leave behind artifacts that make them less than ideal for getting the low end shape we're after: one with the right balance between weight, impact, and clarity.

The focused solution

This inspired the development of the Low End Focus module in Ozone 9. We wanted to create a tool that would help navigate this particular challenge, one that did not leave behind the usual smeary artifacts that come with dynamic range processors.

Some of the inspiration for the tool actually came from a conversation about lossy codecs. The underlying principle of those codecs is masking: if we identify the strongest frequency peaks in a window of audio, we can discard adjacent lower-amplitude peaks that the stronger peaks would mask. Their absence goes unnoticed, and the file size shrinks. Taking that notion of masking and applying some creative thinking, we flipped the concept on its head.
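To make the codec idea concrete, here is a toy sketch of discarding peaks that a stronger neighbor would mask. It is not how any real codec (or Ozone) works: the function name `drop_masked_peaks`, the fixed 100 Hz neighborhood, and the 20 dB margin are all assumptions chosen for illustration, whereas real codecs rely on psychoacoustic models with frequency-dependent masking curves.

```python
def drop_masked_peaks(peaks, max_distance_hz=100.0, margin_db=20.0):
    """Keep only peaks that no stronger nearby peak would (crudely) mask.

    peaks: list of (frequency_hz, level_db) tuples for one analysis window.
    A peak is dropped when another peak within max_distance_hz is at least
    margin_db louder. Both parameters are illustrative assumptions.
    """
    kept = []
    for freq, level in peaks:
        masked = any(
            abs(freq - other_freq) <= max_distance_hz
            and other_level - level >= margin_db
            for other_freq, other_level in peaks
        )
        if not masked:
            kept.append((freq, level))
    return kept


# Hypothetical peaks from one window of low-end audio.
window_peaks = [(50.0, -6.0), (60.0, -30.0), (110.0, -12.0), (400.0, -40.0)]

# The 60 Hz peak sits 24 dB below its 50 Hz neighbor, so it is discarded.
# The quiet 400 Hz peak survives because nothing loud is close to it.
print(drop_masked_peaks(window_peaks))
```

Low End Focus turns this around: rather than throwing the weaker peaks away to save space, it changes how far they sit below the stronger ones.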

The premise

What if the peaks in the low band of the signal were similar enough in amplitude to cause partial masking or loudness loss, rendering the audio muddy or unclear? Could we devise a way to increase the contrast between these signals, emphasizing the highest-amplitude signals to gain clarity? Could we also decrease the contrast, adding fullness to the overall sound by focusing the listener's attention on the sustained and tonal signals? And could we do both without leaving noticeable artifacts?

The result is the Low End Focus module. We implemented two modes with different time constants, so the results would suit either the goal of increased transient strength or increased weight and sustain.

The idea of Low End Focus has an analogy in photography. When the camera's aperture is widened, the depth of field decreases, which blurs objects that are far from the point of focus. Similarly, when the Contrast slider is increased in Low End Focus, the background objects in your track's low end fade away (they are decreased in amplitude). Conversely, when Contrast is decreased, every detail, low or high in level, comes into focus, like a camera set to a narrow aperture and a deep depth of field.

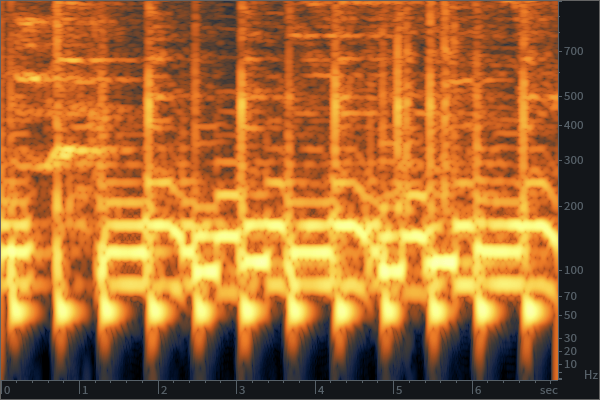

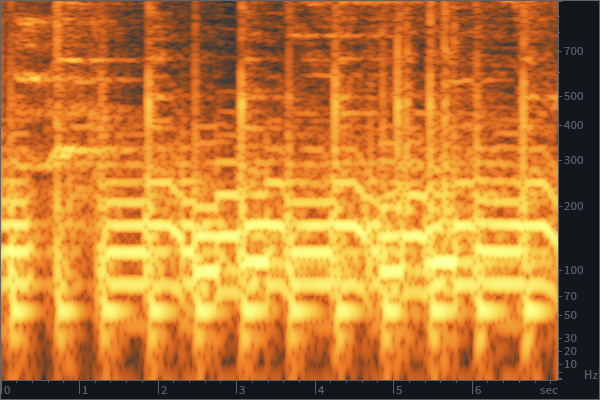

Sonically, this adjusts the amplitude ratio of low-level and high-level audio signals. When Contrast is increased, the lower-amplitude sounds are attenuated, so that the most important events can prevail and dominate the sound scene. This increases the clarity of your mix by reducing the less important sounds that often contribute to a muddy character. Conversely, when Contrast is decreased, all audio events get closer in level for a denser sound.
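As a rough illustration of "adjusting the amplitude ratio," the sketch below stretches or shrinks each band's distance below the loudest band. The function `apply_contrast`, the linear mapping, and the `contrast` range of -1.0 to 1.0 are assumptions made for this example; the actual mapping used by the Contrast slider is not described here.

```python
def apply_contrast(band_levels_db, contrast):
    """Scale each band's distance below the loudest band.

    band_levels_db: per-band levels in dB for one moment in time.
    contrast: hypothetical control in [-1.0, 1.0]. Positive values push
    quieter bands further down; negative values pull them up toward the
    loudest band. The loudest band itself is left unchanged.
    """
    reference = max(band_levels_db)
    scale = 1.0 + contrast
    return [reference - (reference - level) * scale for level in band_levels_db]


levels = [-6.0, -18.0, -12.0]

print(apply_contrast(levels, 0.5))   # gaps of 12 dB and 6 dB grow to 18 dB and 9 dB
print(apply_contrast(levels, -0.5))  # the same gaps shrink to 6 dB and 3 dB
```

In this toy version the strongest band acts as the anchor, so raising the contrast never makes anything louder; it only widens the distance between the dominant event and everything beneath it.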

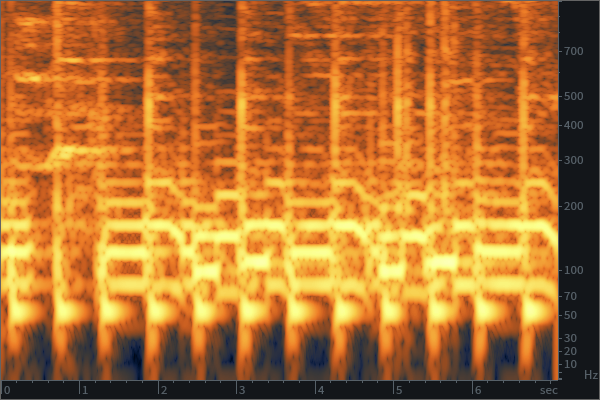

Original audio file

Audio with +50% contrast

Audio with +100% contrast

Audio with −50% contrast

Audio with −100% contrast

Such manipulation of low-level and high-level sounds may resemble dynamic range processing. So, is this effect similar to compression or expansion? Yes and no. A compressor works in the time domain with a certain amplitude threshold. The Low End Focus algorithm classifies audio events based on their level relative to adjacent audio events, not against an absolute threshold. More importantly, the algorithm works on dozens of low-frequency bands, so it can attenuate lower-level signals that sit between the harmonics of another, stronger signal.
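The difference between an absolute threshold and a relative comparison can be shown with a small sketch. Both functions below are hypothetical simplifications (a fixed 6 dB cut, a single sequence of event levels rather than dozens of bands), not the module's algorithm. The point is only that the relative version makes the same decisions when the whole signal is turned down, while the threshold version does not.

```python
def threshold_gains_db(levels_db, threshold_db=-20.0, cut_db=6.0):
    """Attenuate every event that falls below a fixed, absolute threshold."""
    return [-cut_db if level < threshold_db else 0.0 for level in levels_db]


def relative_gains_db(levels_db, margin_db=10.0, cut_db=6.0):
    """Attenuate events at least margin_db quieter than an adjacent event."""
    gains = []
    for i, level in enumerate(levels_db):
        # Immediate neighbors only; the edges of the list have just one.
        neighbors = levels_db[max(i - 1, 0):i] + levels_db[i + 1:i + 2]
        loudest_neighbor = max(neighbors, default=level)
        gains.append(-cut_db if loudest_neighbor - level >= margin_db else 0.0)
    return gains


events = [-10.0, -25.0, -12.0, -15.0]
quieter = [level - 12.0 for level in events]  # same material, 12 dB lower

print(threshold_gains_db(events), threshold_gains_db(quieter))
print(relative_gains_db(events), relative_gains_db(quieter))
```

With the fixed threshold, lowering the input by 12 dB causes every event to be cut. The relative version keeps cutting only the one event that sits well below its neighbors, regardless of overall level.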

On one hand, Low End Focus acts like an adaptive multiband compressor (when viewed in the time domain), but on the other hand (when viewed in the frequency domain), it acts like RX’s Deconstruct module in its ability to attenuate noise between signal harmonics.

It’s important to restate the obvious: getting the shape of your audio correct with minimal signal processing is preferred, but given the reality that we don’t always “get it right” all at once, the hope is that using a tool such as this permits users to make adjustments with fewer compromises to their sonic vision.