MPE: MIDI Polyphonic Expression Explained

MIDI Polyphonic Expression (MPE) is the next evolution of MIDI. In this article, we’ll cover its potential for creative expression and some MPE controllers.

Recently, we took a deep-dive into what MIDI data is and how it works. Continuing this conversation, we’re going to look at MPE data and the technology surrounding it. A rapidly growing number of manufacturers, developers, and users are leveraging the possibilities that MPE unlocks, so let’s learn what all the hype is about.

MPE vs. MIDI

MPE stands for MIDI Polyphonic Expression, but has also been referred to as Multidimensional Polyphonic Expression. MPE uses MIDI data as its core language, but completely changes its approach to sending that data. The MPE protocol can be tough to wrap your head around, so let’s look at it in pieces:

The fundamental feature that makes MPE more expressive than conventional MIDI is that it dedicates a channel to each individual note or voice an instrument is playing, instead of sending all the data on a single channel. This opens the door to per-note modulation, which single-channel MIDI can’t offer for things like pitch bend.

Imagine you’re playing a MIDI keyboard controller with two wheels on the side: one dedicated to pitch bend, and the other (the mod wheel) for modulating another parameter. When you play a chord with your right hand and move the pitch bend wheel, all of the notes bend up or down together, and the same goes for the mod wheel. All notes are affected equally, making every source of modulation a global parameter.

An MPE-compatible controller, or any other source of MPE data, sends its messages in a way that lets each individual note bend or modulate independently. It’s like each finger is playing its own separate controller.
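To make the difference concrete, here’s a minimal sketch that builds the raw MIDI bytes for the same chord both ways. The helper names, the choice of channels 2 to 4, and the bend value of 9000 are our own assumptions for illustration; only the message layouts (Note On and Pitch Bend) come from standard MIDI.

```python
# Raw MIDI 1.0 channel messages, built by hand (no MIDI library assumed).

def note_on(channel: int, note: int, velocity: int = 100) -> bytes:
    """Note On. `channel` is 1-16 as musicians count it; the wire uses 0-15."""
    return bytes([0x90 | (channel - 1), note, velocity])

def pitch_bend(channel: int, value: int) -> bytes:
    """Pitch Bend with a 14-bit value: 0..16383, where 8192 means no bend."""
    return bytes([0xE0 | (channel - 1), value & 0x7F, (value >> 7) & 0x7F])

CHORD = [60, 64, 67]  # C major: C4, E4, G4

# Conventional MIDI: every note shares channel 1, so one bend moves them all.
conventional = [note_on(1, n) for n in CHORD] + [pitch_bend(1, 9000)]

# MPE style: each note gets its own channel (2, 3, 4 here), so a bend sent
# on channel 3 reaches the E alone while the C and G stay put.
mpe = [note_on(ch, n) for ch, n in zip((2, 3, 4), CHORD)] + [pitch_bend(3, 9000)]

for label, stream in (("conventional", conventional), ("mpe", mpe)):
    print(label, [msg.hex(" ") for msg in stream])
```

The only thing that changes between the two streams is the channel nibble in each status byte, which is why MPE can ride on ordinary MIDI connections.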

For example, the voice that plays the suspended fourth in a sus4 chord could bend down and resolve to the third, turning the chord into a triad while the other notes hold their pitch.
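As a rough sketch of that sus4 resolution, the snippet below converts a bend in semitones into a 14-bit pitch bend value and glides only the F of a Csus4 chord down to E (the major third). It assumes a per-note bend range of ±48 semitones, a common MPE default, so check what your synth is actually set to; the function names and the channel numbers are hypothetical.

```python
PITCH_BEND_CENTER = 8192   # 14-bit midpoint: no bend
BEND_RANGE_SEMITONES = 48  # assumed per-note range; must match the receiving synth

def bend_value(semitones: float, bend_range: float = BEND_RANGE_SEMITONES) -> int:
    """Convert a bend in semitones to a 14-bit value, clamped to 0..16383."""
    raw = PITCH_BEND_CENTER + round(semitones / bend_range * 8192)
    return max(0, min(16383, raw))

def pitch_bend(channel: int, value: int) -> bytes:
    """Pitch Bend message for a 1-16 channel number."""
    return bytes([0xE0 | (channel - 1), value & 0x7F, (value >> 7) & 0x7F])

# Csus4 = C4, F4, G4, one note per channel (channel -> MIDI note number).
voices = {2: 60, 3: 65, 4: 67}

# Glide the F down one semitone to E in four steps, on channel 3 only.
steps = [bend_value(-i / 4) for i in range(1, 5)]
messages = [pitch_bend(3, value) for value in steps]

print(steps)
print([m.hex(" ") for m in messages])
```

With a 48-semitone range, a full semitone down lands on 8021, only 171 steps below center. A wide range leaves room for long slides, at the cost of coarser resolution for small bends.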

Pitch bend is just one type of modulation; now imagine that each note can carry several modulations at once. For instance, each note could have its own filter cutoff frequency, its own aftertouch shaping its amplitude, and so on. The results can be more creative and expressive than what traditional MIDI and MIDI controllers offer.
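Here’s a small sketch of what several simultaneous per-note modulations might look like on the wire. MPE conventionally carries them as Pitch Bend, Channel Pressure (aftertouch), and Control Change 74 on the note’s own channel; what CC 74 actually controls (filter cutoff, brightness, something else) is up to the receiving synth. The `expression_frame` helper and the values are our own invention.

```python
from typing import List

TIMBRE_CC = 74  # controller number MPE conventionally uses for the third dimension

def pitch_bend(channel: int, value: int) -> bytes:
    return bytes([0xE0 | (channel - 1), value & 0x7F, (value >> 7) & 0x7F])

def channel_pressure(channel: int, pressure: int) -> bytes:
    """Aftertouch for the whole channel; in MPE that means a single note."""
    return bytes([0xD0 | (channel - 1), pressure & 0x7F])

def control_change(channel: int, controller: int, value: int) -> bytes:
    return bytes([0xB0 | (channel - 1), controller & 0x7F, value & 0x7F])

def expression_frame(channel: int, bend: int = 8192,
                     pressure: int = 0, timbre: int = 64) -> List[bytes]:
    """One snapshot of a single finger: pitch, pressure, and timbre."""
    return [
        pitch_bend(channel, bend),
        channel_pressure(channel, pressure),
        control_change(channel, TIMBRE_CC, timbre),
    ]

# Two fingers doing different things at the same moment.
frames = {
    2: expression_frame(2, bend=8192, pressure=30, timbre=20),    # soft and dark
    3: expression_frame(3, bend=8500, pressure=110, timbre=100),  # pressed hard, bright, slightly sharp
}

for channel, frame in frames.items():
    print(channel, [m.hex(" ") for m in frame])
```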

That’s a lot of data!

Because of its ability to transmit and receive sophisticated expression signals, MPE generates a much larger amount of data than the traditional MIDI protocol. So how does all that data get sent around, and what are the repercussions?

Remember, MPE data assigns each played note its own data channel, instead of routing all MIDI data to a single device through a single channel. The multiple MPE channels are all still routed to one device, but they each control parameter modulations for a single voice.

MPE signal flow from controller to output

In the most common setup, channel 1 is reserved for global parameters: those that affect all notes. This leaves 15 of MIDI’s 16 channels available, so you could play 15 notes at once, each with its own stream of data.
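The channel bookkeeping described above can be sketched as a tiny allocator: channel 1 stays global, and channels 2 through 16 are handed out to notes as they’re played. The class below is a hypothetical illustration, not how any particular controller does it; real devices use their own strategies when all 15 channels are busy.

```python
from typing import Dict, List, Optional

class MpeChannelAllocator:
    """Hands out channels 2-16 to notes; channel 1 stays the global channel."""

    MASTER_CHANNEL = 1

    def __init__(self) -> None:
        self._free: List[int] = list(range(2, 17))  # 15 per-note channels
        self._active: Dict[int, int] = {}           # note number -> channel

    def note_on(self, note: int) -> Optional[int]:
        if note in self._active:          # already sounding: keep its channel
            return self._active[note]
        if not self._free:                # all 15 channels in use
            return None
        channel = self._free.pop(0)
        self._active[note] = channel
        return channel

    def note_off(self, note: int) -> Optional[int]:
        channel = self._active.pop(note, None)
        if channel is not None:
            self._free.append(channel)    # reuse the longest-idle channel first
        return channel

allocator = MpeChannelAllocator()
channels = [allocator.note_on(note) for note in range(60, 75)]  # 15 notes
overflow = allocator.note_on(75)   # a 16th note: no channel left
freed = allocator.note_off(60)     # lift the first finger
reused = allocator.note_on(76)     # the freed channel is available again

print(channels, overflow, freed, reused)
```

Returning `None` for the 16th note is the simplest possible policy; it’s chosen here only to keep the sketch short.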

Depending on how many notes you play at once and how many modulation sources you assign, your computer might struggle to process all that data in real time without overloading the CPU. Also, some DAWs and virtual instruments don’t receive MPE data, which complicates things. To make this all work, you need a controller that sends MPE, a DAW that passes that data along to the devices you load, and a device that receives those messages and turns them into audio. Luckily, more and more affordable, MPE-compatible hardware and software is appearing on the market.
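To put a rough number on “all that data,” here’s a back-of-the-envelope estimate of the message volume. The 100 updates per second per note is purely an assumption for illustration (controllers vary), and running status is ignored. The classic 5-pin MIDI cable rate of 31,250 bits per second is used only as a yardstick; USB and in-the-box connections are far faster.

```python
BAUD = 31_250                                # classic 5-pin DIN MIDI line rate, bits/s
BITS_PER_BYTE = 10                           # 8 data bits plus a start and a stop bit
WIRE_BYTES_PER_SEC = BAUD // BITS_PER_BYTE   # 3125 bytes/s

# One expression update for one note:
# pitch bend (3 bytes) + channel pressure (2 bytes) + CC 74 (3 bytes)
BYTES_PER_UPDATE = 3 + 2 + 3

UPDATE_RATE_HZ = 100  # assumed updates per second per note, for illustration only

def bytes_per_second(notes: int, updates_per_second: int) -> int:
    """Expression traffic generated by `notes` held notes."""
    return notes * updates_per_second * BYTES_PER_UPDATE

for notes in (1, 4, 10, 15):
    load = bytes_per_second(notes, UPDATE_RATE_HZ)
    share = load / WIRE_BYTES_PER_SEC
    print(f"{notes:2d} notes: {load:5d} bytes/s ({share:.0%} of a DIN MIDI cable)")
```

Under these assumptions, a four-note chord already produces more expression data than a traditional MIDI cable can carry, which gives a sense of why MPE is a heavier stream than a keyboard with one shared pitch wheel.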

Editing MPE

When you open up a MIDI editor, you’re accustomed to editing MIDI notes and envelopes a certain way: pitch bend information is displayed as a single line that you can easily edit by clicking or drawing. MPE’s note-specific modulation can make for some serious editing challenges in this scenario. How do you display pitch bend data for four simultaneous notes in one editor?

There have been a few attempts to do this, some more elegant than others. Perhaps the slickest is found in Waveform, a lesser-known DAW from Tracktion.

MIDI is an amazingly powerful tool that gives us the opportunity to capture a performance, as opposed to a sound, and to edit it to our heart’s content. In a sense, the impetus behind the development of MPE technology was to create greater expressive possibilities for capturing a sound, rather than an editable performance. As such, it’s a data-rich protocol, and has yet to cement its place in our current editing paradigm.

MPE controllers

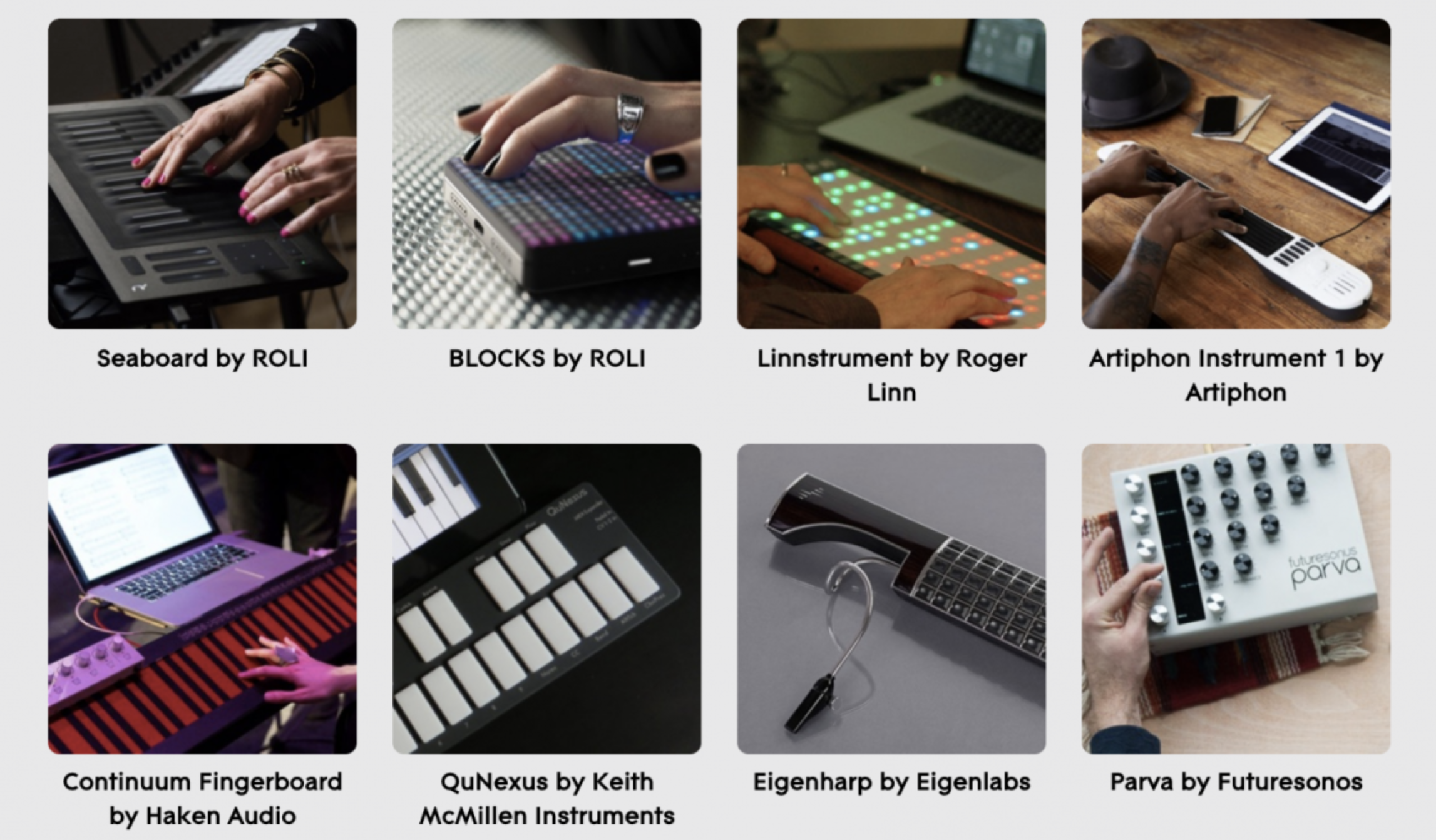

There are several MPE controllers available, ranging from something as small as ROLI’s Lightpad Block to something relatively humongous like the Continuum Fingerboard from Haken Audio. Other robust, MPE-enabled keyboard controllers include ROLI’s Seaboard series and the Osmose from Expressive E.

MPE controllers (image from The MIDI Association, leaders in MIDI technology)

Some MPE controllers are designed to emulate the experience of playing common instruments, such as INSTRUMENT 1 from Artiphon and the Eigenharp by Eigenlabs. There are also grid-based controllers like the LinnStrument from Roger Linn Design, and those that don’t really fit into any of these categories, like the Touché from Expressive E, the Joué Board, or the Sensel Morph.

Each of these controllers has its own distinguishing features, but the one thing they all do is track each of your fingers independently. The controller then sends the data associated with each finger’s movements on its own MIDI channel. From there, the rest is up to your DAW, software synth, or other program that can receive MPE data.
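On the receiving end, a program can sort incoming messages by channel to recover what each finger is doing. The sketch below is a deliberately simplified, hypothetical receiver: it assumes one note per channel, ignores the global channel, and treats CC 74 as the third expression dimension. It isn’t taken from any particular synth or DAW.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass
class Voice:
    note: int
    velocity: int
    bend: int = 8192     # 14-bit pitch bend, 8192 = centered
    pressure: int = 0    # channel pressure (aftertouch)
    timbre: int = 64     # CC 74

def apply_message(voices: Dict[int, Voice], msg: bytes) -> None:
    """Update per-channel voice state from one raw MIDI message."""
    status = msg[0] & 0xF0
    channel = (msg[0] & 0x0F) + 1
    if status == 0x90 and msg[2] > 0:                     # Note On
        voices[channel] = Voice(note=msg[1], velocity=msg[2])
    elif status == 0x80 or (status == 0x90 and msg[2] == 0):  # Note Off
        voices.pop(channel, None)
    elif channel in voices:                               # expression for a held note
        voice = voices[channel]
        if status == 0xE0:
            voice.bend = msg[1] | (msg[2] << 7)
        elif status == 0xD0:
            voice.pressure = msg[1]
        elif status == 0xB0 and msg[1] == 74:
            voice.timbre = msg[2]

stream = [
    bytes([0x91, 60, 100]),     # finger 1: C4 on channel 2
    bytes([0x92, 64, 90]),      # finger 2: E4 on channel 3
    bytes([0xE2, 0x00, 0x50]),  # bend channel 3 only
    bytes([0xD1, 80]),          # press harder on channel 2 only
    bytes([0xB2, 74, 30]),      # change timbre on channel 3 only
    bytes([0x81, 60, 0]),       # finger 1 lifts
]

voices: Dict[int, Voice] = {}
for message in stream:
    apply_message(voices, message)

print(voices)
```

Because the channel number identifies the voice, the receiver never has to guess which note a bend or pressure message belongs to.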

Conclusion

MPE is a powerful protocol, and its creative potential is only now getting its due in the audio community. The MIDI spec hasn’t changed very much since the 1980s, so although the creation of MPE might seem like one small step, it’s quite the giant leap towards more expressive options for developers, manufacturers, and users.